AAG Boston 2017 Day 1 wrap up!

Day 1: On Thursday I focused on the organized sessions on uncertainty and context in geographical data and analysis. I’ve found AAGs to be more rewarding if you focus on a theme rather than jump from session to session, though it does mean fewer steps on the iWatch, of course. There are nearly 30 (!) sessions on these topics throughout the conference.

An excellent plenary session on New Developments and Perspectives on Context and Uncertainty started us off, with Mei Po Kwan and Michael Goodchild providing overviews. We need to create reliable geographical knowledge in the face of the challenges brought up by uncertainty and context. For example: people and animals move through space, phenomena are multi-scaled in space and time, and data are heterogeneous, all of which make creating knowledge difficult. There were sessions focusing on sampling, modeling, and patterns, on remote sensing (mine), on planning and sea level rise, on health research, on urban context and mobility, and on big data, data context, data fusion, and visualization of uncertainty. What a day! All of this is necessarily interdisciplinary. Here are some quick insights from the keynotes.

Mei Po Kwan focused on uncertainty and context in space and time:

- We all know about the modifiable areal unit problem (MAUP), but what about its parallel in time? That is the MTUP: the modifiable temporal unit problem.

- Time is very complex. There are many characteristics of time and change: momentary, time-lagged response, episodic, duration, and cumulative exposure.

- Sub-discussion: change has patterns as well. Changes can be clumpy in space and time.

- How do we aggregate, segment and bound spatial-temporal data in order to understand process?

- The basic message is that you must really understand uncertainty: Neighborhood effects can be overestimated if you don’t include uncertainty.
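To make the MTUP point concrete, here is a minimal sketch in Python. It is not from the talk: the event times, the `aggregate` helper, and the bin choices are all made up for illustration. It shows how the same clumpy set of events looks different depending on how you segment time.

```python
from collections import Counter

def aggregate(event_hours, bin_width, origin=0):
    """Count events per temporal bin of `bin_width` hours, with bins starting at `origin`."""
    counts = Counter((h - origin) // bin_width for h in event_hours)
    return dict(sorted(counts.items()))

# Hypothetical event times (hours since start), clumped around hours 10-14.
events = [2, 10, 11, 11, 12, 12, 13, 13, 14, 22]

# Same data, three different temporal units.
by_12h = aggregate(events, 12)                     # bins [0,12), [12,24)
by_12h_shifted = aggregate(events, 12, origin=6)   # bins [-6,6), [6,18), [18,30)
by_6h = aggregate(events, 6)                       # bins [0,6), [6,12), ...

print(by_12h)          # {0: 4, 1: 6}
print(by_12h_shifted)  # {-1: 1, 0: 8, 1: 1}
print(by_6h)           # {0: 1, 1: 3, 2: 5, 3: 1}
```

With bins starting at hour 0, the clump is split across a boundary and looks like two moderately busy periods. Shift the bin origin by six hours and the same events show up as one strong episode. Nothing about the data changed, only the temporal unit.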

As expected, Michael Goodchild gave a master class in context and uncertainty. No one else can deliver such complex material so clearly, with a mix of theory and common sense. Inspiring. Anyway, he talked about:

- Data are a source of context:

  - Vertical context – other things that are known about a location, that might predict what happens and help us understand the location;

  - Horizontal context – things about neighborhoods that might help us understand what is going on.

- Both of these aspects have associated uncertainties, which complicate analyses.

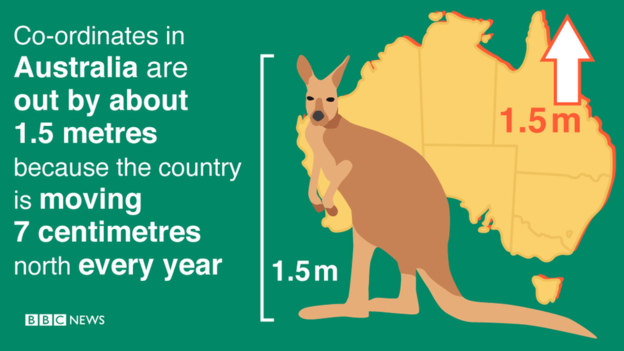

- Why is geospatial data uncertain?

  - Location measurement is uncertain

  - Any integration of location is also uncertain

  - Observations are non-replicable

  - Loss of spatial detail

  - Conceptual uncertainty

- This is the paradox: we have abundant sources of spatial data that are potentially useful, yet all of them are subject to myriad types of uncertainty. In addition, the conceptual definition of context is fraught with uncertainty.

- He then talked about some tools for dealing with uncertainty, such as areal interpolation and spatial convolution.

- He finished with some research directions, including focusing on behavior and pattern, better ways of addressing confidentiality, and development of a better suite of tools that include uncertainty.

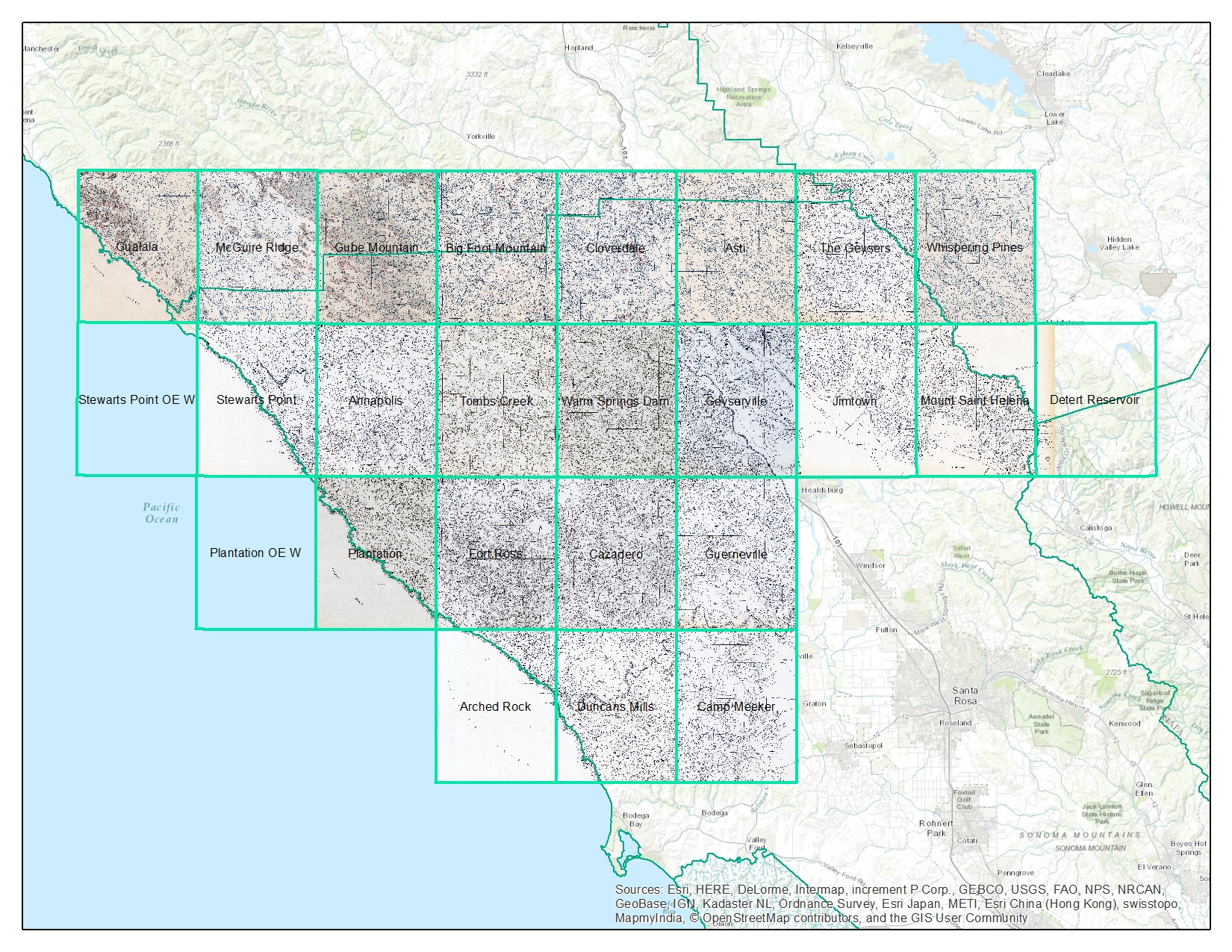

My session went well. I chaired a session on uncertainty and context in remote sensing with four great talks: Devin White and Dave Kelbe from Oak Ridge National Laboratory gave a pair of talks on ORNL work in photogrammetry and stereo imagery, Corrine Coakley from Kent State is working on reconstructing ancient river terraces, and Chris Amante from the great CU is developing uncertainty-embedded bathy-topo products. My talk was on uncertainty in lidar inputs to fire models, and I got a great question from Mark Fonstad about whether the errors are really independent: canopy height and canopy base height are likely correlated, so aren’t their errors correlated too? Why treat them as independent? That kind of blew my mind, but Qinghua Guo stepped in with some helpful words about the difficulties of sampling from a joint probability distribution in Monte Carlo simulations.
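To see why the independence question matters, here is a rough Python sketch. It is not the analysis from my talk: the function name, the error sizes, and the correlation value are all assumptions for illustration, and I use crown length (canopy height minus canopy base height) as a stand-in for a derived quantity that depends on both inputs. Two correlated Gaussian errors are drawn from two independent standard normals, which is the two-variable version of a Cholesky factorization.

```python
import math
import random
import statistics

def simulate_crown_length_sd(sd_ch, sd_cbh, rho, n=20000, seed=42):
    """Monte Carlo estimate of the error spread in (canopy height - canopy base height).

    sd_ch, sd_cbh: assumed standard deviations of the two input errors (metres).
    rho: assumed correlation between the two errors (0 means independent).
    """
    rng = random.Random(seed)
    diffs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        err_ch = sd_ch * z1
        # Mix z1 into the second error so that corr(err_ch, err_cbh) = rho.
        err_cbh = sd_cbh * (rho * z1 + math.sqrt(1.0 - rho ** 2) * z2)
        diffs.append(err_ch - err_cbh)
    return statistics.pstdev(diffs)

# Hypothetical error magnitudes: 1.0 m for canopy height, 0.5 m for base height.
sd_independent = simulate_crown_length_sd(1.0, 0.5, rho=0.0)
sd_correlated = simulate_crown_length_sd(1.0, 0.5, rho=0.8)

print(f"independent errors: {sd_independent:.2f} m")
print(f"correlated errors:  {sd_correlated:.2f} m")
```

For a difference of two Gaussian errors the spread is sqrt(sd_ch² + sd_cbh² − 2·rho·sd_ch·sd_cbh), so assuming independence when the errors are positively correlated overstates the uncertainty in the difference (and would understate it for a sum). With only two variables the joint sampling is easy. The difficulty Qinghua pointed to shows up when there are many inputs and the correlations themselves are poorly known.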

Plus we had some great times with Jacob, Leo, Yanjun and the Green Valley International crew, who were showcasing their series of lidar instruments and software. Good times for all!